The Critical Role of Data Backup Security in Ransomware Defense

Dyslexic engineer trying to simplify cybersecurity.

The lifecycle of a data backup is often neglected and needs stronger security. A reliable, immutable backup is essential protection against ransomware attacks. Your security responsibility does not end once you have processes that regularly back up your systems and export the backups to storage you can access later, in case your systems are corrupted or attacked.

“I have hourly data backups of my VM – I am now protected and can restore data if something goes wrong”

Really? Several things need attention before you can call a backup strategy “reliable”.

First, let’s look at a few scenarios that fit “something goes wrong” but that weak backup strategies do not handle:

A few ransomware attacks

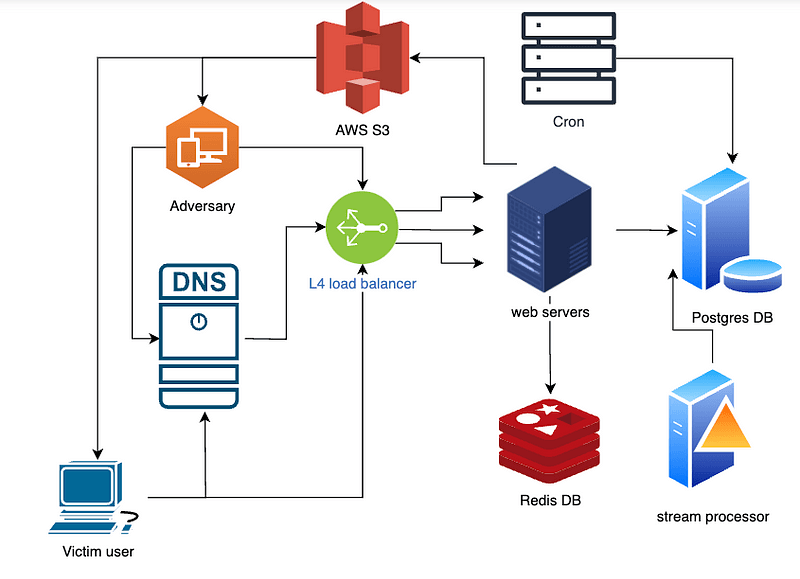

In 2017, EternalBlue, an exploit for a vulnerability in the Windows implementation of the SMB protocol, paved the way for the WannaCry ransomware. The attack's MO was ordinary ransomware, but its ability to spread across networks on its own, hitting the many machines that had not yet applied Microsoft's patch, made it far more dangerous.

People who kept backups of their data (at least the important data) outside the infected network or in the cloud were relatively safe.

Okay...

Ragnar Locker (2019), Locky, Crypto, and Ryuk are a bit special. Once running, they delete any existing backups, encrypt the data, and then display the ransom note.

Virginia’s largest provider of orthopedic medicine and therapy, OrthoVirginia, was hit with a Ryuk ransomware attack that disabled access to workstations, imaging systems needed for scheduled surgeries, backed-up data and more.

This raises a question: even if you accept that the data has been leaked, how can you restore it after a targeted ransomware attack that deletes or encrypts your precious backups?

You can't.

(assuming your WAL segments are also corrupted or caught up in the attack)

Be wary of software that claims to be “ransomware proof” or “100% secure”; no such guarantee is realistic. Security is a shared responsibility of the software, the admin, and the user.

Let’s look at a few backup strategies and discuss their security aspects.

[There are many other possible solutions. If you have a better one, I would genuinely like to discuss it in the comments.]

Data backup strategies

Method 1: Backing up to a local disk

This is a well-known but not-so-great backup strategy. A backup agent or script regularly backs up your data and saves the backup files to a local disk. To access the files, you SSH into the server running the backup agent.

Fig 1. Backing up data to a local disk
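To make Method 1 concrete, here is a minimal sketch of such a backup script, assuming the data source is a plain directory tree. The function name `backup_to_local_disk` and the archive naming scheme are hypothetical, chosen only for illustration.

```python
import tarfile
import time
from pathlib import Path


def backup_to_local_disk(source_dir: str, backup_dir: str) -> Path:
    """Archive source_dir into a timestamped .tar.gz inside backup_dir.

    Hypothetical helper illustrating Method 1: the archive lands on a disk
    of the same server, with no redundancy and no protection from anyone
    who can log in to this machine.
    """
    dest = Path(backup_dir)
    dest.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%dT%H%M%S")
    archive = dest / f"backup-{stamp}.tar.gz"
    with tarfile.open(archive, "w:gz") as tar:
        # arcname stores paths relative to the source directory's name
        tar.add(source_dir, arcname=Path(source_dir).name)
    return archive
```

Everything an attacker needs to wipe these archives (a shell on this server) is the same thing you use to read them.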

In this setup, many things can fall under “something goes wrong”:

There is no data redundancy. If the server holding the backups dies, the backups die with it.

If the data source is compromised, the backup server is likely to be attacked as well, because it has direct access to the source. [How exposed it is depends on how the two services are connected.]

If the backup server is compromised, the attacker can not only encrypt your data source but also delete all the backups, making recovery impossible.

Let’s upgrade this setup a bit to add some security and redundancy.

Method 2: Backing up to remote distributed storage

Here, the backup files are stored on a NAS or cloud storage with a replication factor of 3 (or more). This article does not cover NAS backup (NDMP) or NAS gateways, and there is more to consider if your data is measured in petabytes.

Fig 2. Backing up to a distributed storage. The NAS ACL only allows creating backups.

Now you can tolerate some node failures in your distributed NAS. With a strict ACL on the NAS, you grant the backup manager only the permissions it needs: it can upload new backup files but cannot update, overwrite, or delete existing ones. Even if the VM running the backup manager is compromised and new backups stop being generated, you still have the previous backups.
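The create-only permission described above can be modeled with a small in-memory sketch. `WriteOnceStore` is a hypothetical class for illustration only; a real NAS or object store enforces this through its own ACL or object-lock features, not through application code.

```python
class WriteOnceStore:
    """Toy model of backup storage whose ACL lets the backup manager
    create new objects but never overwrite or delete existing ones.

    Hypothetical class; real storage enforces this server-side.
    """

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put_new(self, key: str, data: bytes) -> None:
        # Create-only: refuse to replace a backup that already exists.
        if key in self._objects:
            raise PermissionError(f"overwrite denied: {key}")
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]

    def delete(self, key: str) -> None:
        # The backup manager's role has no delete permission at all.
        raise PermissionError(f"delete denied: {key}")

    def keys(self) -> list[str]:
        return sorted(self._objects)
```

A compromised backup manager calling `put_new` with an existing key, or calling `delete`, gets a `PermissionError`, and the earlier backup stays intact.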

Though this setup works for some, it is not secure enough.

The backups flow unencrypted between the source, backup manager, and storage manager.

There are no checks to verify the integrity of your backups.
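One common way to add integrity checks is a checksum manifest: record a SHA-256 digest for each backup file when it is written, then re-hash and compare later. The sketch below assumes backups are plain files in one directory; the function names and the JSON manifest format are hypothetical. The manifest should live somewhere the backup writer cannot modify, since an attacker who can rewrite both the backups and the manifest defeats the check.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large backups need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def write_manifest(backup_dir: Path, manifest: Path) -> None:
    """Record a digest for every file in backup_dir (run at backup time)."""
    digests = {
        p.name: sha256_of(p) for p in sorted(backup_dir.iterdir()) if p.is_file()
    }
    manifest.write_text(json.dumps(digests, indent=2))


def verify_manifest(backup_dir: Path, manifest: Path) -> list[str]:
    """Return the names of files that are missing or whose digest changed."""
    expected = json.loads(manifest.read_text())
    bad = []
    for name, digest in expected.items():
        p = backup_dir / name
        if not p.is_file() or sha256_of(p) != digest:
            bad.append(name)
    return bad
```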

What about the 3-2-1 rule?

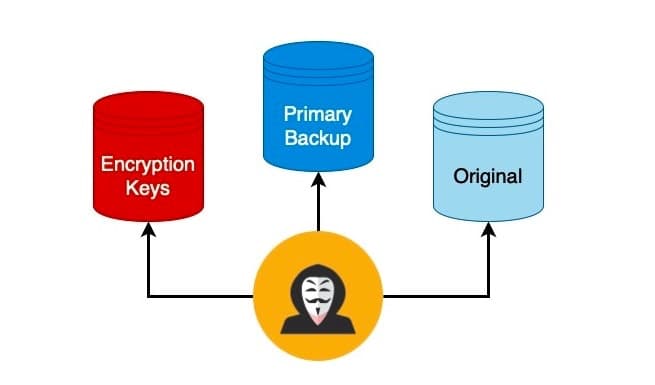

Method 3: Backing up to a reliable data store that is fault-tolerant and immutable

This setup upgrades the previous one in a few ways. The first is the 3-2-1 rule: keep three copies of the data (including the original) on two different types of storage, with one copy on isolated offsite storage.

Fig 3. Backing up to a reliable data store that is fault-tolerant and immutable
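The 3-2-1 rule is simple enough to check mechanically against an inventory of copies. This sketch assumes each copy is described by a location label, a storage media type, and an offsite flag; `Copy` and `satisfies_3_2_1` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    location: str  # e.g. "primary-db", "nas-cluster", "offsite-vault"
    media: str     # storage type, e.g. "ssd", "nas", "tape", "object-storage"
    offsite: bool  # stored outside the primary site and network


def satisfies_3_2_1(copies: list[Copy]) -> bool:
    """True if there are at least 3 copies (original included),
    on at least 2 media types, with at least 1 copy offsite."""
    return (
        len(copies) >= 3
        and len({c.media for c in copies}) >= 2
        and any(c.offsite for c in copies)
    )
```

Three copies on the same kind of NAS, or three media types all in one building, both fail the check, which is exactly what the rule is meant to catch.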

The data is encrypted at multiple stages:

At rest, starting at the source.

In transit, throughout the whole backup management lifecycle.

The private keys used for encryption must be managed carefully and protected by a strict ACL.

A Hardware Security Module (HSM) should be used here to offload encryption, decryption, and key management. Because the private keys stay inside the HSM and you interact with them only through its APIs, there is less chance of your encryption keys being leaked or stolen. Of course, the ACL of the HSM clusters must be managed carefully, and the principle of least privilege should be followed when creating users that can call HSM functions.
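The key-isolation idea can be sketched with a toy stand-in. Python's standard library has no block cipher, so this sketch swaps in HMAC-SHA256 signing of backup data instead of encryption; what it illustrates is only the boundary: callers get operations, never the key, and only allow-listed roles may use the key. `ToyHSM` and the role names are hypothetical. Python cannot truly hide an attribute, whereas a real HSM enforces this boundary in hardware and is typically driven through an interface such as PKCS#11.

```python
import hashlib
import hmac
import secrets


class ToyHSM:
    """In-process stand-in for an HSM (hypothetical, illustration only).

    The key is generated inside the object and no method returns it;
    callers can only ask for sign/verify, and only allow-listed roles
    may sign.
    """

    def __init__(self, signer_roles: set[str]) -> None:
        self.__key = secrets.token_bytes(32)  # never returned by any method
        self._signer_roles = frozenset(signer_roles)

    def sign(self, role: str, data: bytes) -> str:
        # Least privilege: only named roles may use the signing key.
        if role not in self._signer_roles:
            raise PermissionError(f"role {role!r} may not use the signing key")
        return hmac.new(self.__key, data, hashlib.sha256).hexdigest()

    def verify(self, data: bytes, tag: str) -> bool:
        expected = hmac.new(self.__key, data, hashlib.sha256).hexdigest()
        # Constant-time comparison avoids leaking how many characters matched.
        return hmac.compare_digest(expected, tag)
```

For example, the backup manager could sign each archive's digest, and a restore job could call `verify` before trusting the archive.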

Security efficiency through the "Pyramid of Difficulty"

The access policy at every stage in the data backup’s lifecycle should only allow the data to be replicated or forwarded to its next location. The backup manager should only have enough permissions to snapshot or stream the data from the source, and should not be able to delete or make any changes to the data source.

Similarly, all stages must have appropriate least permissions to distribute and securely store the data.
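A per-stage least-privilege policy can be written down as a deny-by-default permission map and audited automatically. The role names and `resource:action` strings below are hypothetical, loosely following the pipeline in Fig 3; real systems would express the same idea in their own IAM or ACL language.

```python
# Hypothetical role -> permission map; each stage can only read from its
# predecessor and write to its successor.
PERMISSIONS: dict[str, frozenset[str]] = {
    "backup-manager": frozenset({"source:read", "staging:write"}),
    "storage-manager": frozenset({"staging:read", "replica:write"}),
    "offsite-replicator": frozenset({"replica:read", "offsite:write"}),
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are refused."""
    return action in PERMISSIONS.get(role, frozenset())


def destructive_grants() -> list[tuple[str, str]]:
    """Audit helper: list any grant that could delete or modify data."""
    return [
        (role, action)
        for role, actions in PERMISSIONS.items()
        for action in sorted(actions)
        if action.endswith((":delete", ":update"))
    ]
```

Running `destructive_grants()` in CI and failing on a non-empty result is one way to stop a convenient "just give it delete" change from slipping in.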

Compartmentalizing the systems that handle the data and segregating their roles will not make your systems 100% secure, but it greatly reduces the attack surface. The effectiveness of your security strategy depends on how well a compromised system can contain the damage and limit further propagation.

The more complex or segmented your systems become for the sake of security, the more the attack surface should shrink; otherwise, your security is inefficient.

ACL Management

Throughout the backup management lifecycle, configuring and maintaining an ACL for each actor involved is an important part of securing your backups. Protecting the ACL framework itself is a separate topic that this article skips, but a weak ACL makes all your effort to secure the backups useless.

Note: don’t lock yourself out so thoroughly that neither the attacker nor you can access the data; that is as good as deleting it.

The right ACL should make your storage immutable and air-gapped (at least while it is not in use).

Deleting or updating crucial data should require consensus (usually unanimous) from a group of admins. This does not apply to deleting data that falls outside the configured retention period.

Deleting backups is not a routine operation, and this extra hurdle only makes sense in a few cases.

Fig 4. Consensus for deleting crucial files.
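The consensus rule in Fig 4 can be expressed as a small policy check. This is a minimal sketch assuming each backup has a creation time and a retention period, and that approvals are collected as a set of admin names; `may_delete` and its parameters are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def may_delete(
    created_at: datetime,
    retention: timedelta,
    admins: set[str],
    approvals: set[str],
    now: Optional[datetime] = None,
) -> bool:
    """Hypothetical deletion policy.

    Past the retention period: routine cleanup, no vote needed.
    Inside the retention period: every admin must approve (unanimous).
    Approvals from non-admins do not count toward consensus.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    if now >= created_at + retention:
        return True
    # An empty admin group must never approve by default.
    return bool(admins) and admins <= approvals
```

A single stolen admin account is then not enough to wipe backups that are still inside their retention window.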

Are data backups enough to protect one against ransomware?

Relying on data backups alone is not enough. Backup pipelines need regular scanning and assessment, and the backups themselves need regular integrity checks. Restores should also be tested to confirm that they work as expected.
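A restore test can be automated: extract the backup into a scratch directory and compare it, file by file, with what it is supposed to contain. This sketch assumes a tar archive that stores the tree under the source directory's name, and compares against the live source tree; `restore_matches_source` is a hypothetical name. It uses tarfile's `"data"` extraction filter when the running Python supports it, since a backup archive that may have been tampered with should not be trusted to write outside the scratch directory.

```python
import filecmp
import tarfile
import tempfile
from pathlib import Path


def restore_matches_source(archive: Path, source_dir: Path) -> bool:
    """Extract archive into a scratch dir and compare it with source_dir."""
    with tempfile.TemporaryDirectory() as scratch:
        with tarfile.open(archive) as tar:
            if hasattr(tarfile, "data_filter"):
                # Reject absolute paths, "..", and other unsafe members.
                tar.extractall(scratch, filter="data")
            else:
                tar.extractall(scratch)
        restored = Path(scratch) / source_dir.name
        return restored.is_dir() and _trees_equal(restored, source_dir)


def _trees_equal(a: Path, b: Path) -> bool:
    """Recursively compare two trees by file names and file contents."""
    cmp = filecmp.dircmp(a, b)
    if cmp.left_only or cmp.right_only or cmp.funny_files:
        return False
    # shallow=False forces a byte-by-byte comparison instead of stat checks.
    _, mismatch, errors = filecmp.cmpfiles(a, b, cmp.common_files, shallow=False)
    if mismatch or errors:
        return False
    return all(_trees_equal(a / d, b / d) for d in cmp.common_dirs)
```

Comparing against the live source only works right after the backup is taken; for older backups, comparing against a stored checksum manifest is the more practical check.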

Securing data backups needs as much attention as your main application or product. The software and processes that make your backups reliable are also susceptible to cyber-attacks. All systems, including the backup stations, should use proper monitoring tools, IDPS, network segmentation, encryption, and system operation tools to reduce the attack surface and improve visibility into the systems.

Security teams should run regular training sessions to stay up to date and prepare for cyber-attacks. Common exercises simulate real-world scenarios through advanced threat simulation, red/blue teaming, and penetration testing.

Conclusion

So, if software-1 is protected by software-2, don’t you need software-3 to protect software-2? … and then software-4 …?

Not really. Consider executing a program written in a high-level language like C++: it goes from compiler > assembly > machine code > instruction decoder > CPU. At each step, a layer of abstraction is removed so the data can be processed, and each step has a single job. Similarly, in a distributed system, each service should have a dedicated objective.

Open-source backup management software such as Bacula and Amanda implements most of these security aspects and offers many other features, including automation.

Data backups may seem simple, but making them reliable takes a good deal of attention.